Andrew Ferguson on Twitter: "Anyone have any experience good or bad running PyTorch on the new Apple M1 chip?"

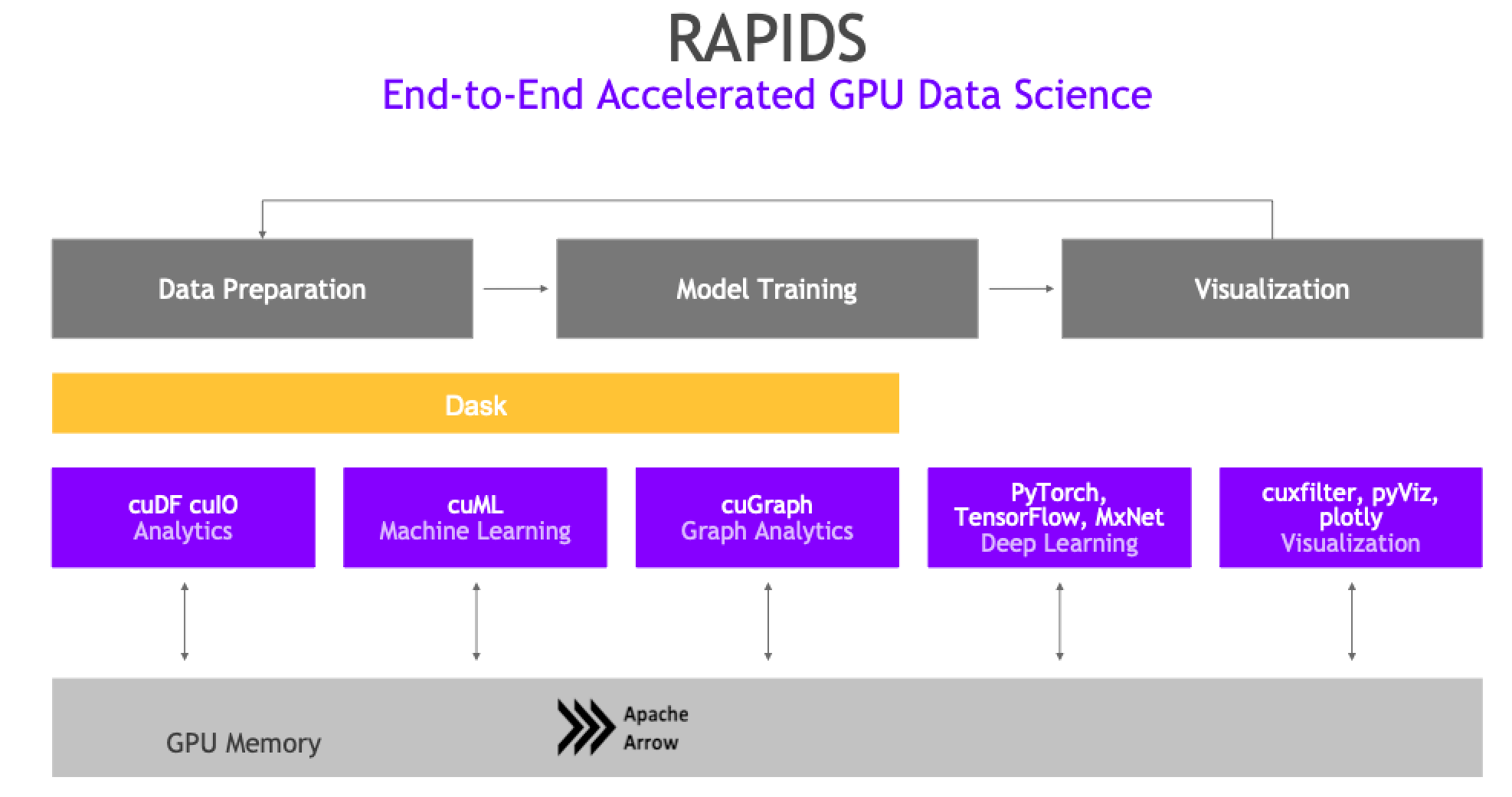

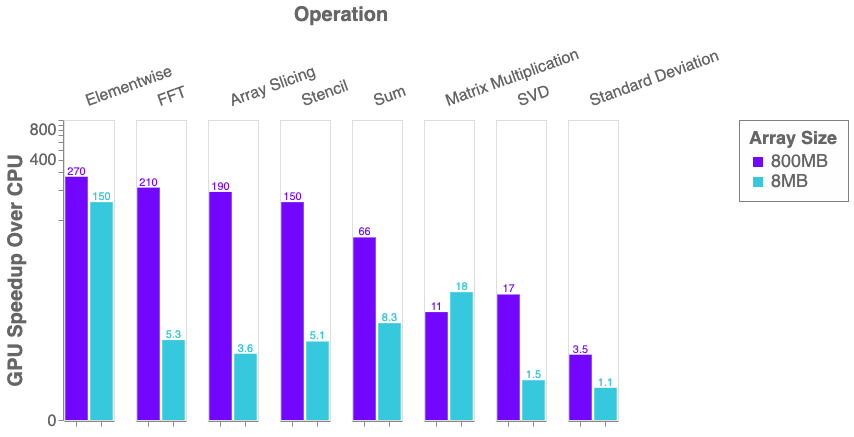

Python, Performance, and GPUs. A status update for using GPU… | by Matthew Rocklin | Towards Data Science

PyTorch gains GPU acceleration on the Apple M1 chip: training speed improves 7x and peak performance improves 21x

GitHub - vvestman/pytorch-ivectors: GPU accelerated implementation of i-vector extractor training using PyTorch. Requires Kaldi for feature extraction and UBM training. An example script is provided for VoxCeleb data.

GitHub - zylo117/pytorch-gpu-macosx: Tensors and Dynamic neural networks in Python with strong GPU acceleration. Adapted to Mac OS X with NVIDIA CUDA GPU support.

PyTorch announces GPU acceleration support for the Apple M1 chip: training is 6 times faster and inference is 21 times faster
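As a rough illustration of what "GPU acceleration on the M1" looks like from user code, here is a minimal sketch of device selection, assuming a PyTorch build that ships the Metal Performance Shaders (`mps`) backend (added in PyTorch 1.12). The helper name `pick_device` and the toy `nn.Linear(4, 2)` model are hypothetical, chosen only for illustration; the sketch prefers `mps`, then CUDA, then falls back to the CPU.

```python
import torch
from torch import nn


def pick_device() -> torch.device:
    """Hypothetical helper: prefer Apple's MPS backend, then CUDA, then CPU."""
    # torch.backends.mps does not exist in PyTorch releases before 1.12.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return torch.device("mps")
    if torch.cuda.is_available():
        return torch.device("cuda")
    return torch.device("cpu")


device = pick_device()

# Toy model and batch, both placed on the selected device.
model = nn.Linear(4, 2).to(device)
x = torch.randn(8, 4, device=device)
y = model(x)
print(device, y.shape)
```

The same script runs unchanged on an M1 Mac, a CUDA machine, or a CPU-only box; only the selected device differs.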

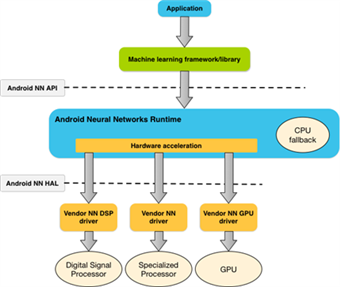

Improve PyTorch App Performance with Android NNAPI Support - AI and ML blog - Arm Community

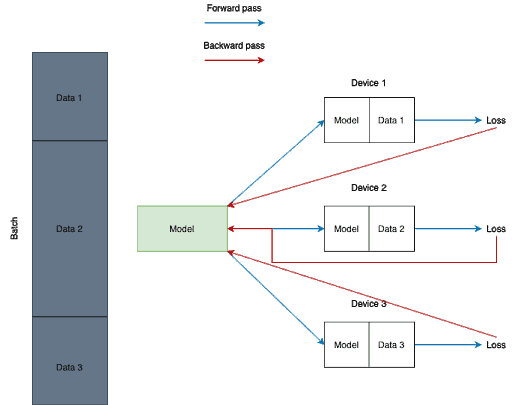

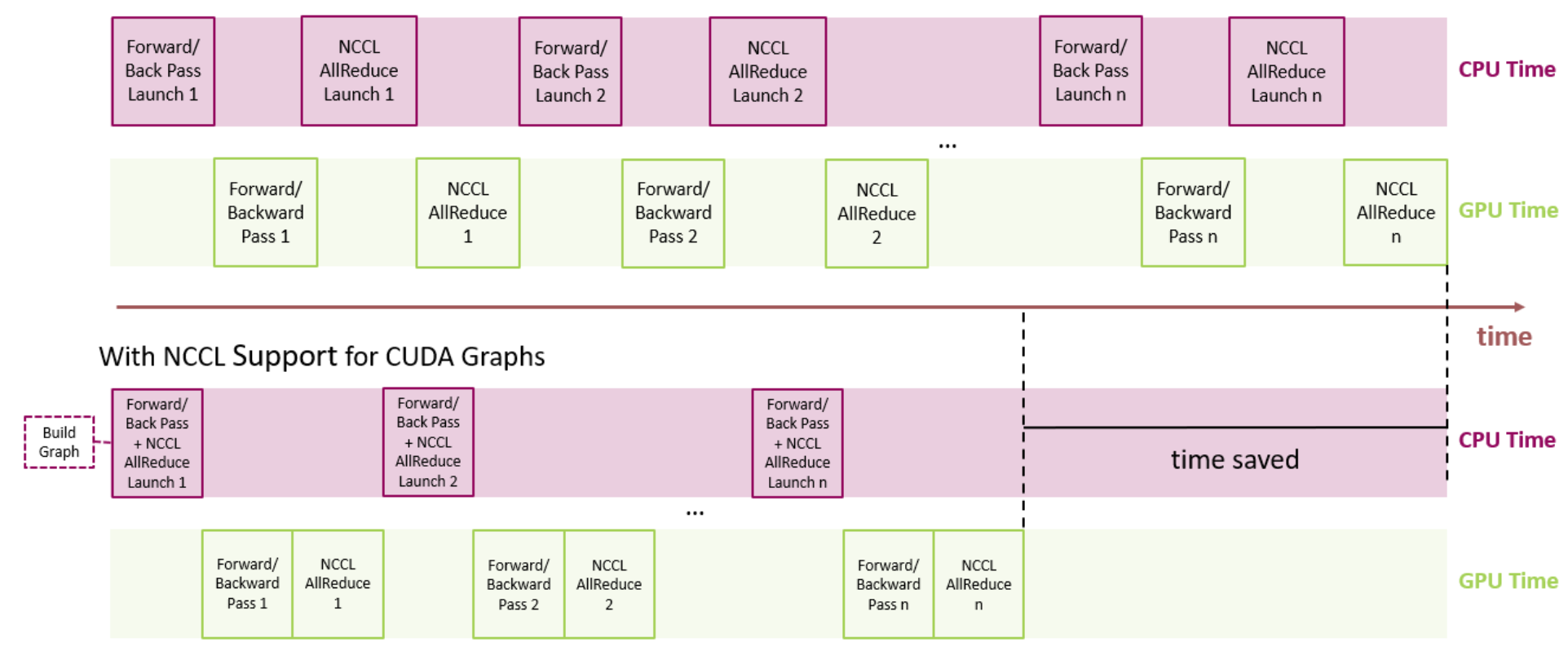

MONAI v0.3 brings GPU acceleration through Auto Mixed Precision (AMP), Distributed Data Parallelism (DDP), and new network architectures | by MONAI Medical Open Network for AI | PyTorch | Medium
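For context on the Auto Mixed Precision (AMP) part of that title, below is a minimal sketch of one mixed-precision training step in plain PyTorch (`torch.autocast` plus a gradient scaler). It is not MONAI's own API and does not cover DDP. Assumptions: the function name `amp_train_step`, the toy linear model, and the random data are hypothetical; float16 is used on CUDA and bfloat16 on CPU, and the scaler is disabled (a pass-through) when CUDA is unavailable.

```python
import torch
from torch import nn


def amp_train_step(model, optimizer, scaler, inputs, targets, device_type):
    """Hypothetical helper: one optimizer step with autocast mixed precision."""
    optimizer.zero_grad(set_to_none=True)
    amp_dtype = torch.float16 if device_type == "cuda" else torch.bfloat16
    # Eligible ops (e.g. the linear layer) run in reduced precision here.
    with torch.autocast(device_type=device_type, dtype=amp_dtype):
        outputs = model(inputs)
    # Compute the loss in float32 for numerical stability.
    loss = nn.functional.mse_loss(outputs.float(), targets)
    # Loss scaling protects float16 gradients from underflow; it is a no-op when disabled.
    scaler.scale(loss).backward()
    scaler.step(optimizer)  # unscales gradients; skips the step if inf/NaN are found
    scaler.update()
    return loss.item()


use_cuda = torch.cuda.is_available()
device_type = "cuda" if use_cuda else "cpu"

model = nn.Linear(16, 1).to(device_type)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
scaler = torch.cuda.amp.GradScaler(enabled=use_cuda)

# Random stand-in data, purely for illustration.
inputs = torch.randn(64, 16, device=device_type)
targets = torch.randn(64, 1, device=device_type)

losses = [
    amp_train_step(model, optimizer, scaler, inputs, targets, device_type)
    for _ in range(5)
]
print(losses)
```

Newer PyTorch releases also expose the scaler as `torch.amp.GradScaler("cuda", ...)`; the `torch.cuda.amp` spelling is used here because it works across more versions, possibly with a deprecation warning.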

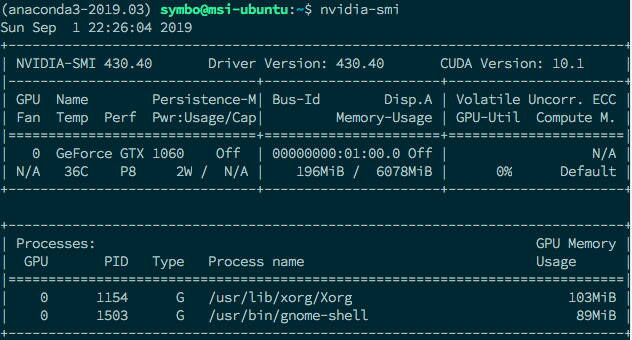

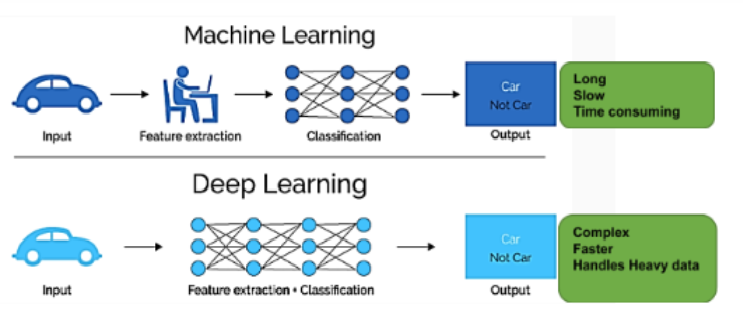

Learning MNIST with GPU Acceleration - A Step by Step PyTorch Tutorial - Make Your Own Neural Network